May be spreading misinformation undermine efforts

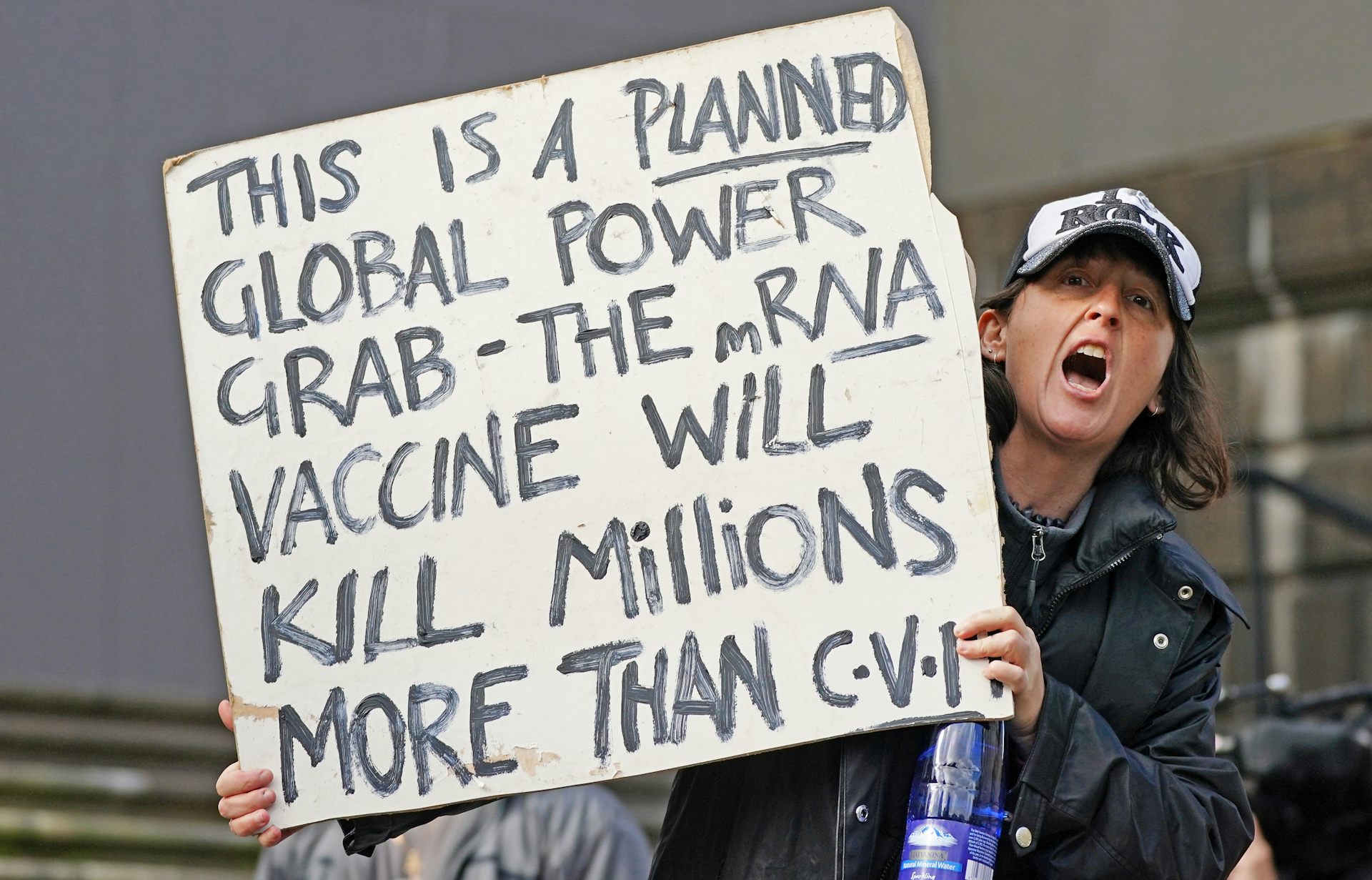

Because this does profoundly impact both our right to free expression and our exposure to misleading information, which can cause death through things like anti-vaccine views or whatnot. Joseph Bak-Coleman: Just taking a huge step back, it’s quite scary that the decisions are gonna be made by a single individual. What does your work tell us about that approach? He’s made clear his interest in removing most content moderation for the platform. Grid: Elon Musk is on the verge of buying Twitter. This interview has been edited for length and clarity. He spoke with Grid about the role of this kind of research in raising those questions - and how hands-off Elon Musk can actually be if he takes over Twitter. From there, broader ethical questions can be considered about how and when they should be applied. The effectiveness of these interventions is the first part of the equation, Bak-Coleman added.

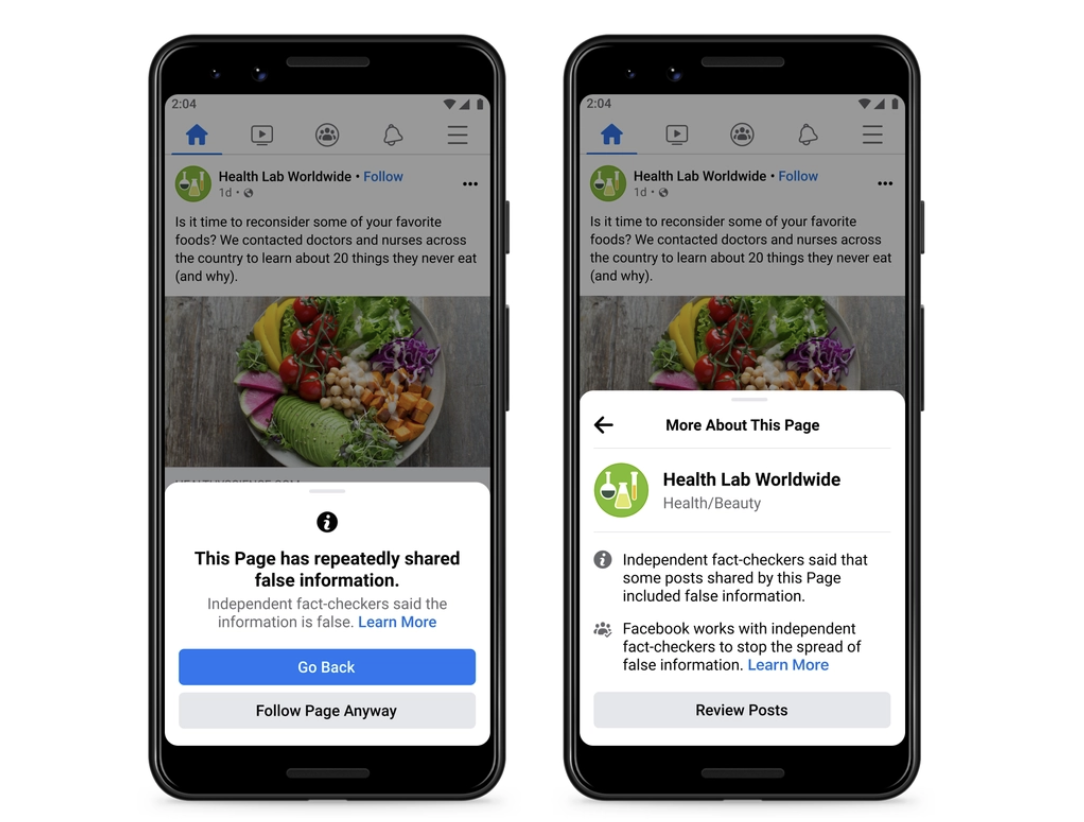

But by implementing a combined approach, they argued, platforms can reduce misinformation “without having to catch everything, convince most people to share better or resort to the extreme measure of account removals.” A spokesperson for Twitter did not respond to Grid’s request for comment.īak-Coleman and his team acknowledged that, without insight into Twitter’s algorithm and content moderation practices more broadly, they cannot account for existing practices. “Because the current thing is, we try something and see if it works … so we’re kind of fixing the problem after the fact.”Īccording to Twitter’s “ civic integrity policy,” the company sometimes removes posts, limits their spread or adds additional context when they contain electoral misinformation. This might be one of many, hopefully, that we wind up using,” he said. “We can use models and data to try to understand how policies will impact misinformation spread before we apply them. This simulation work can allow researchers to experiment with how a given content moderation policy may play out before it is implemented, the study’s lead author, Joseph Bak-Coleman, a postdoctoral fellow at the center, told Grid’s Anya van Wagtendonk. 15, 2020, which were connected to “viral events.” It’s a similar approach to how researchers study infectious disease - for example, modeling how masking and social distancing mandates interact with covid spread. The researchers modeled “what-if” scenarios using a dataset of 23 million election-related posts collected between Sept. But “even a modest combined approach can result in a 53.3% reduction in the total volume of misinformation,” the researchers report. Banning verified accounts with large followings that were known to spread misinformation can reduce engagement with false posts by just under 13 percent, the researchers concluded.Įach intervention requires the others to be most effective, the paper published in the journal Nature Human Behaviour, concludes. Nudges toward more careful reposting behavior resulted in 5 percent less sharing and netted a 15 percent drop in engagement with a misinforming post. Social media platforms can slow the spread of misinformation if they want to - and Twitter could have better reduced the spread of bad information in the lead-up to the 2020 election, according to a new research paper released Thursday.Ĭombining interventions like fact-checking, pushing people to consider before reposting something and banning some misinformation super-spreaders can substantially reduce viral misinformation from spreading compared with isolated steps, researchers at the University of Washington Center for an Informed Public concluded.ĭepending on how fast a fact-checked piece of misinformation is removed, its spread can be reduced by about 55 to 93 percent, the researchers found.